Accounting for the “Costs of Inaction” in Cost-Benefit Analysis

Inaction isn't so much a cost as a source of continued problems

Caleb Watney of the Institute for Progress has put together an impressive list of projects he’d like to see researchers working on related to innovation. The list of ideas apparently emerged out of conversations that took place at a recent NBER innovation bootcamp training session.

Many of the items on the list are on topics related to the institutions of science, patents, and intellectual property. These are all important, to be sure, but one of the listed ideas in particular stuck out to me, which was:

Are there ways we can reliably incorporate the costs of *inaction* into government decision-making and cost-benefit analysis?

This is a topic I have thought and written a fair amount about, so I thought I would chime in with a few thoughts and suggest some directions research in this area could take.

Cost of Inaction or Business as Usual?

My initial reaction upon seeing the topic is that it is not quite framed in the right way. Thinking of inaction as a “cost” can be somewhat misleading. Caleb gives the examples of the FDA holding up drug approvals and the National Environmental Policy Act holding up infrastructure projects during environmental reviews. It’s easy to see how these policies produce large costs to society, relative to a world where the policies don’t exist. However, because these policies do exist, from a cost-benefit standpoint it’s better to think of them as creating problems. Here’s what I mean by that:

In an economic analysis, costs are measured relative to a “baseline scenario.” The baseline scenario captures what the world would look like in the absence of any particular policy action or change. The baseline represents the “business as usual” setting. In crude terms, it’s what would happen if we just “did nothing” and let things proceed on the course we are currently on.

It’s easy to see how the “costs of inaction” that Watney is concerned about are related to this baseline scenario in a cost-benefit analysis. Importantly, however, there are no costs in the baseline scenario. Rather, costs and benefits are measured relative to the baseline scenario. The costs of “inaction” in a typical CBA are therefore zero by construction. A cost arises only when some change in behavior moves us away from the baseline. A CBA that tries to count inaction as a cost is not being conducted correctly.

That said, there are constructive ways in which we can try to get at the issue Watney is concerned about. For example, I suspect a lot of people would be uncomfortable with the idea there are no costs associated with climate change, just because they are baked into the baseline scenario. Technically, this would be the right way to think about climate change from a cost-benefit standpoint, but it conflicts with our moral intuitions.

So instead we can think about what the world would look like in the absence of climate change, or in the absence of FDA and NEPA reviews, and then evaluate the costs of the present situation relative to that world. That is, we change the baseline scenario and evaluate the status quo relative to a superior alternative. Any inadequacies we identify can be counted as “costs” in that cost-benefit analysis.
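To make the baseline logic concrete, here is a minimal sketch in Python. The welfare figures and the function name `cost_relative_to_baseline` are hypothetical, chosen only for illustration; they do not come from any actual analysis.

```python
def cost_relative_to_baseline(scenario_welfare: float, baseline_welfare: float) -> float:
    """Return the cost of a scenario measured against a baseline.

    A positive value means the scenario leaves society worse off than the baseline.
    """
    return baseline_welfare - scenario_welfare


# Hypothetical annual welfare levels, in billions of dollars.
status_quo = 900.0      # the world as it is, with the problem in place
problem_free = 1000.0   # a counterfactual world without the problem

# Typical CBA: the baseline is the status quo, so "doing nothing" costs zero.
cost_of_inaction = cost_relative_to_baseline(status_quo, baseline_welfare=status_quo)

# Alternative framing: the baseline is the problem-free world,
# so the status quo itself now shows up as a cost.
cost_of_status_quo = cost_relative_to_baseline(status_quo, baseline_welfare=problem_free)

print(cost_of_inaction)    # 0.0
print(cost_of_status_quo)  # 100.0
```

The same status quo produces a cost of zero or a cost of 100 depending only on which baseline it is compared against, which is why the two figures cannot be mixed in one analysis.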

These cost estimates can’t just be thrown into a run-of-the-mill cost-benefit analysis evaluating a project or regulation, however, because those CBAs will be evaluated relative to a different baseline. Nevertheless, cost estimates of this kind can still prove useful, especially in benefits analysis, as I discuss in more detail below.

This may all sound complicated, but the point is simply that this evaluation doesn’t belong in the cost section of a cost-benefit analysis. Rather, it belongs in an analysis outlining the problem a regulation or other policy is addressing. Sometimes this is referred to as “market failure analysis.” The relevant problem can also be a “government failure,” as in the case of FDA or NEPA creating problems. I prefer the term “problem analysis,” and here’s how it works.

What’s the Problem?

My late former Mercatus Center colleague Jerry Ellig went to great lengths to review dozens of the regulatory impact analyses federal agencies produce alongside their regulations. This was part of a project called the Regulatory Report Card, which I helped Jerry manage for several years in the early 2010s. Jerry showed empirically that a detailed analysis of the problem a regulation is addressing is often missing from agency economic analysis. Too often, agencies just jump into an analysis of costs and benefits, more or less assuming that a problem exists.

Jerry and I wrote several reports together about the importance of defining and understanding the problem a regulation is addressing. A complete problem analysis should include several elements:

A description of the problem being addressed

Evidence the problem is real and systemic, rather than anecdotal

A projection of how the problem is expected to evolve over time, including whether it is getting better or worse

A description of what is causing the problem, since solutions should address root causes and not symptoms

A theory about how various solutions will work to mitigate the problem

Jerry and a team of economists (including me) graded agencies on the extent to which they included analysis of the problem in their regulatory analysis. Unfortunately, it is rare to see robust market failure analysis from federal agencies. Instead, it is common for agencies to speculate about an externality or other market failure that might exist, without offering any real evidence. Or they simply state Congress made them issue a regulation, and that’s why the agency is moving forward with it. Sometimes the issue is overlooked altogether.

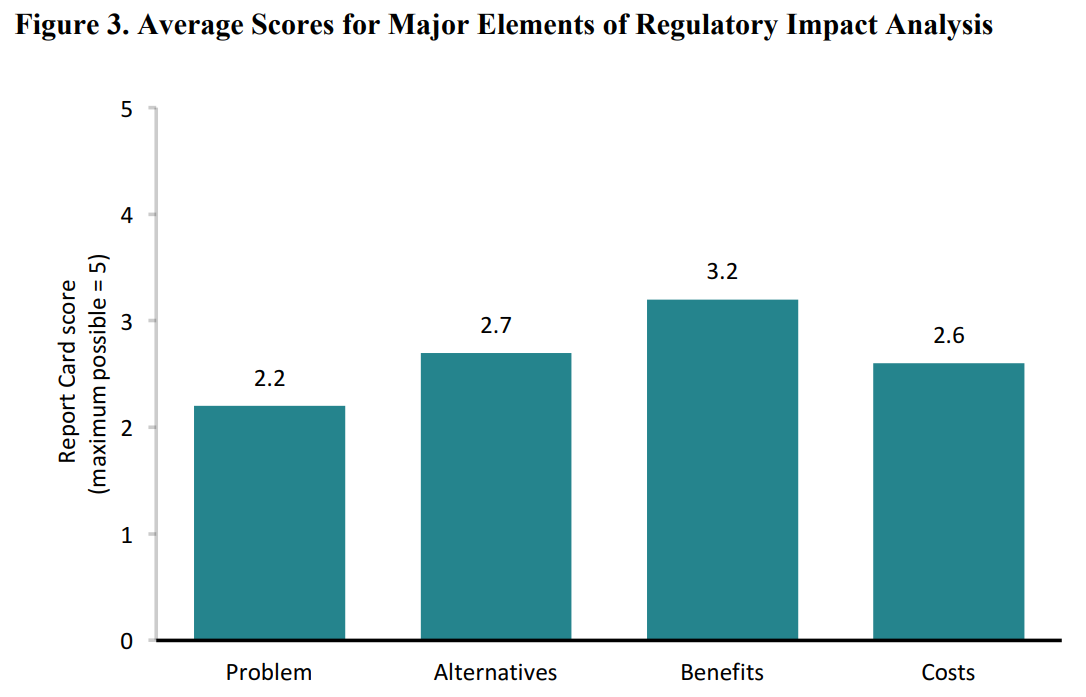

Jerry and his team showed that analysis of the problem is one of the weakest components of regulatory analysis. That’s according to the 5-point scale used in the Report Card project. By comparison, analysis of alternatives to a regulation being proposed, and analysis of benefits and costs scored higher. See the figure below.

If a regulatory agency doesn’t have a strong understanding of the problem it is addressing, it is unlikely the agency will design solutions that are well-tailored to solving the particular problem (if one even exists). I can think of a few reasons why more problem analysis is not being conducted by federal agencies.

It is hard to do. This is especially true when making projections about how a problem evolves in the future. Even projections a year or two out can be difficult when there isn’t much data available.

Policymakers don’t want to be held accountable. If agencies say they expect a problem to look a certain way a few years out, and this doesn’t end up happening, they look bad.

By the time economists get involved, a decision has usually been made that the agency is moving forward with a regulation. Economists see the problem analysis as unnecessary at that point.

We Can Do Better

Fortunately, we have examples of what problem analysis can look like. One example comes from a 2017 Council of Economic Advisers report about the costs of the opioid crisis. The CEA estimated that in 2015 alone, the economic cost of the opioid crisis was valued at just over $500 billion, or about 2.8 percent of GDP. This was primarily due to opioid overdose deaths.

This to me looks like the kind of “cost analysis” that Watney probably would like to see, except, recall, the “cost” as estimated in this report is a cost relative to a world where there is no problem at all, i.e., where none of the reported 33,000 opioid-related deaths in 2015 took place. We can’t just pop that $500 billion figure into a cost-benefit analysis of a regulation addressing opioid addiction, because the regulation will be evaluated in the context of a world where those deaths are already occurring each year (i.e., they are in the baseline).

However, the problem analysis could still prove useful in this kind of CBA. That’s because, despite these “costs” being built into the current baseline, estimating them is a good first step towards estimating benefits of a regulation addressing the problem. In other words, agencies can draw from information in the problem analysis when they propose policy solutions that help mitigate the problem, in this case by reducing opioid-related deaths.

Much like an untapped oil reservoir underground, the $500 billion in economic losses annually from opioid overdoses represents a pool of untapped benefits that policies could deliver. Each policy that makes improvements towards mitigating the problem will tap into those benefits to a marginal extent. In this way, highlighting a problem and valuing it in monetary terms gives an incentive to agencies to do something about the problem, because they can claim credit later on for achieving significant benefits.
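As a rough sketch of that arithmetic, suppose a policy mitigates some share of the problem and that benefits scale proportionally with that share. Only the roughly $500 billion total comes from the CEA report; the 5 percent share, the proportionality assumption, and the function name `marginal_benefit` are hypothetical and used purely for illustration.

```python
# Approximate CEA estimate of the 2015 cost of the opioid crisis, in billions of dollars.
PROBLEM_SIZE_BILLIONS = 500.0


def marginal_benefit(problem_size: float, share_mitigated: float) -> float:
    """Benefit of a policy that mitigates a given share of the problem.

    Assumes, for illustration only, that benefits are proportional to the share mitigated.
    """
    if not 0.0 <= share_mitigated <= 1.0:
        raise ValueError("share_mitigated must be between 0 and 1")
    return problem_size * share_mitigated


# Hypothetical policy that mitigates 5 percent of the problem.
benefit = marginal_benefit(PROBLEM_SIZE_BILLIONS, share_mitigated=0.05)

# What is left of the "pool" for other policies to address.
remaining_problem = PROBLEM_SIZE_BILLIONS - benefit

print(f"Claimed benefit: ${benefit:.1f} billion")
print(f"Remaining problem: ${remaining_problem:.1f} billion")
```

In practice the share a given policy mitigates would have to be estimated, and benefits need not be proportional, but the sketch shows how a problem estimate becomes the starting point for a benefits estimate.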

Conclusion

If we want to see certain issues receive more attention in a cost-benefit analysis, we need to first frame the issues properly. In this case, it’s better to think about “costs of inaction” in terms of a problem that exists that is in need of a solution, as opposed to thinking about an additional cost that should be tallied on the cost side of the ledger in a CBA.

If we want agencies to do something about specific problems, it is also important for them to see it in their interests to do something. Regulators have traditionally been hesitant to carefully evaluate the problems their regulations are addressing, likely because they don’t want to be more transparent and they don’t want to be held accountable when their policies don’t accomplish their goals.

Having regulatory agencies produce detailed reports about the nature and economic magnitude of various problems society faces—separate from a traditional cost-benefit analysis—can help address this issue by providing agencies with an incentive to do a better job. Problem analysis of this kind is potentially extremely valuable because agencies can later claim benefits of their future actions relying on information from these reports. Currently, very little benefits analysis is even conducted in the federal government, so generating more problem analysis could be a good first step towards more and better estimates of benefits.

My recommendation to the Institute for Progress would be to explore what good problem analysis looks like, and maybe even to conduct a few original analyses themselves. These could offer examples to regulators and even be used in the production of benefits estimates later. I’d be happy to be involved in projects along these lines. If the folks at the Institute for Progress have any interest, they should let me know.